Second, Flores-101 is suitable for many-to-many evaluation, meaning that it enables seamless evaluation of 10,100 language pairs. First, we provide the community with a high-quality benchmark that has much larger breadth of topics and coverage of low resource languages than any other existing dataset (§ 4). With this dataset, we make several contributions. We present the Flores-101 benchmark, consisting of 3001 sentences sampled from various topics in English Wikipedia and professionally translated in 101 languages. In particular, there are even fewer benchmarks that are suitable for evaluation of many-to-many multilingual translation, as these require multi-lingual alignment (i.e., having the translation of the same sentence in multiple languages), which hampers the progress of the field despite all the recent excitement on this research direction. As a result, it is difficult to draw firm conclusions about research efforts on low-resource MT. There are some benchmarks that have high coverage, but these are often in specific domains, like COVID- 19 (Anastasopoulos et al., 2020) or religious texts (Christodouloupoulos and Steedman, 2015 Malaviya et al., 2017 Tiedemann, 2018 Agić and Vulić, 2019) or have low quality because they are built using automatic approaches (Zhang et al., 2020 Schwenk et al., 2019, 2021). These often have very low coverage of low-resource languages (Riza et al., 2016 Thu et al., 2016 Guzmán et al., 2019 Barrault et al., 2020a Nekoto et al., 2020 Ebrahimi et al., 2021 Kuwanto et al., 2021), limiting our understanding of how well methods generalize and scale to a larger number of languages with a diversity of linguistic features. By publicly releasing such a high-quality and high-coverage dataset, we hope to foster progress in the machine translation community and beyond.Īt present, there are very few benchmarks on low-resource languages. 
The resulting dataset enables better assessment of model quality on the long tail of low-resource languages, including the evaluation of many-to-many multilingual translation systems, as all translations are fully aligned. These sentences have been translated in 101 languages by professional translators through a carefully controlled process. In this work, we introduce the Flores-101 evaluation benchmark, consisting of 3001 sentences extracted from English Wikipedia and covering a variety of different topics and domains. Current evaluation benchmarks either lack good coverage of low-resource languages, consider only restricted domains, or are low quality because they are constructed using semi-automatic procedures. Be sure to communicate your results to the relevant audiences.One of the biggest challenges hindering progress in low-resource and multilingual machine translation is the lack of good evaluation benchmarks. Finally, interpret and act on your comparison and benchmarking results by drawing conclusions or making recommendations to close any gaps and improve performance. Once you have the data and information, compare and benchmark your results with those of your sources. This could include financial metrics, customer satisfaction ratings, operational efficiency measures, innovation indicators, or best practices examples.

After that, collect and analyze the data and information necessary to compare and benchmark your results.

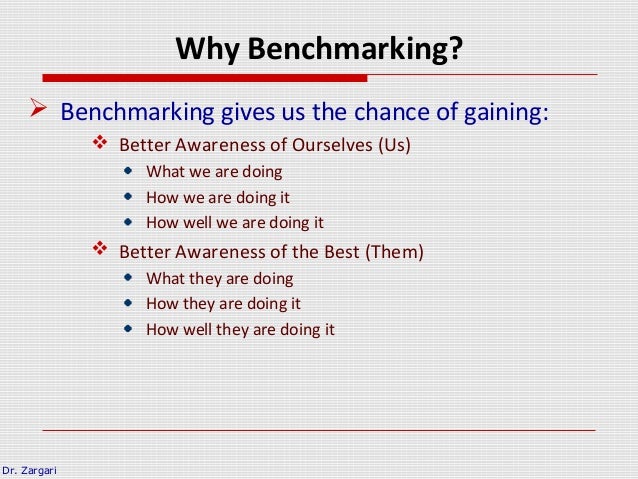

Then, identify your comparison and benchmarking sources, such as competitors or industry leaders, that are comparable, credible, and current. To begin, choose a suitable gap analysis framework that matches your needs and objectives, and allows you to compare and benchmark your results with relevant criteria or indicators. However, there are some general steps and tips to make the process easier and more effective. Comparing and benchmarking your gap analysis results is not a straightforward process.
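The figure of 10,100 language pairs quoted for Flores-101 follows from counting ordered (source, target) pairs among 101 languages: 101 × 100 = 10,100. The sketch below illustrates that count, and shows why full alignment makes every pair usable. It is only an illustration: the language codes, the toy sentences, and the helper names `translation_directions` and `aligned_examples` are assumptions made up for this example, not part of the benchmark or its tooling.

```python
from itertools import permutations


def translation_directions(languages):
    """Return every ordered (source, target) pair with source != target."""
    return list(permutations(languages, 2))


def aligned_examples(corpus, source, target):
    """Pair sentence i in the source language with sentence i in the target.

    This only works because the corpus is fully aligned: every language
    holds a translation of the same sentences, in the same order.
    """
    return list(zip(corpus[source], corpus[target]))


# Hypothetical placeholder codes standing in for the 101 languages.
languages = [f"lang{i:03d}" for i in range(101)]
directions = translation_directions(languages)
print(len(directions))  # 101 * 100 ordered pairs

# Toy aligned corpus (made-up data, three languages, two sentences each).
toy_corpus = {
    "en": ["Hello.", "Thank you."],
    "fr": ["Bonjour.", "Merci."],
    "es": ["Hola.", "Gracias."],
}
fr_to_es = aligned_examples(toy_corpus, "fr", "es")
print(fr_to_es)
```

Note that the non-English pair (`fr` to `es`) needs no extra data: both sides are translations of the same English source sentence, so aligning by position is enough.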
